Photos Ottoman Bed 2025-03-24

March 24, 2025

The assembly was generally pretty straightforward, but a bit of a slog!

March 19, 2025

This week in ollama-buddy updates, I have been continuing on the busy bee side of things.

The headlines are:

- fabric prompts presets: mainly because I thought user-curated prompts were a pretty cool idea, and now that I have system prompts implemented it seemed like a perfect fit. All I needed to do was pull the patterns directory and parse it accordingly; of course Emacs is good at this.
- … dired!
- ollama API: includes model management, so pulling, stopping, deleting and more!
- … sexp usual keybindings and then load back in to the variable.
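As a rough illustration of the "pull the patterns directory and parse" step, here is a minimal sketch. It assumes each fabric pattern is a subdirectory containing a `system.md` file; the function name `my/fabric-patterns` is hypothetical and is not taken from ollama-buddy itself.

```elisp
(require 'subr-x) ; for `string-trim' on older Emacs versions

;; Hypothetical helper, not part of ollama-buddy.
;; Assumption: PATTERNS-DIR holds one subdirectory per pattern,
;; each with its prompt text in a file called system.md.
(defun my/fabric-patterns (patterns-dir)
  "Return an alist of (NAME . SYSTEM-PROMPT) for patterns under PATTERNS-DIR."
  (let (result)
    ;; "\\`[^.]" skips ".", ".." and any hidden entries.
    (dolist (dir (directory-files patterns-dir t "\\`[^.]") (nreverse result))
      (let ((file (expand-file-name "system.md" dir)))
        ;; Entries without a readable system.md are silently ignored.
        (when (file-readable-p file)
          (push (cons (file-name-nondirectory dir)
                      (with-temp-buffer
                        (insert-file-contents file)
                        (string-trim (buffer-string))))
                result))))))
```

The resulting alist could then feed something like `completing-read` to pick a preset by name.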

March 14, 2025

More improvements to ollama-buddy https://github.com/captainflasmr/ollama-buddy

The main addition is system prompts, which allow setting the general tone and guidance of the overall chat. Currently the system prompt can be set at any time and turned on and off, but to enhance my model-per-command concept for each menu item, I think I could also add a :system property to the menu alist definition, allowing even tighter control over how a menu action shapes the prompt response.
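To make the :system idea a little more concrete, here is a small sketch of how such a property might sit in a menu alist and be looked up. The variable `my/menu-items`, the function `my/menu-item-system` and the entries themselves are hypothetical illustrations, not the actual ollama-buddy definitions.

```elisp
;; Hypothetical menu definition: each entry is (NAME . PLIST).
;; The :system property is the proposed per-item system prompt.
(defvar my/menu-items
  '((refactor  :key ?r :description "Refactor code"
               :model "llama3.2:1b"
               :system "You are a careful programmer. Reply with code only.")
    (proofread :key ?p :description "Proofread text"
               :system "You are a meticulous copy editor.")
    (chat      :key ?c :description "Open chat"))
  "Illustrative menu alist; not the real ollama-buddy variable.")

(defun my/menu-item-system (name &optional fallback)
  "Return the :system prompt for menu item NAME, or FALLBACK if it has none."
  (or (plist-get (cdr (assq name my/menu-items)) :system)
      fallback))
```

With this shape, an item without a :system property simply falls back to whatever global system prompt is currently set.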

March 11, 2025

Continuing the development of my local ollama LLM client called ollama-buddy…

https://github.com/captainflasmr/ollama-buddy

The basic functionality, I think, is now there (and now literally zero configuration required). If a default model isn't set I just pick the first one, so LLM chat can take place immediately.

March 7, 2025

I've been a busy little bee in the last few days, so there are quite a few improvements to ollama-buddy, my Emacs LLM ollama client. They are listed below:

March 2, 2025

Some improvements to my ollama LLM package…

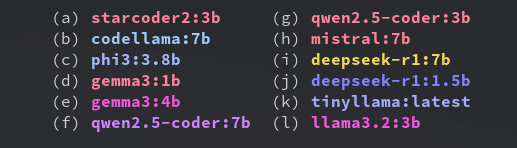

With the new multishot mode, you can now send a prompt to multiple models in sequence and compare their responses. The results are also available in named registers.
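The general shape of that idea can be sketched as below. This is only an illustration under my own assumptions: `my/multishot` is a hypothetical name, the request itself is delegated to a caller-supplied function, and the choice of registers a, b, c and so on is a guess rather than the scheme the package actually uses.

```elisp
;; Hypothetical sketch, not the ollama-buddy implementation.
;; ASK-FN is any function of (MODEL PROMPT) that returns the reply as a string.
(defun my/multishot (prompt models ask-fn)
  "Send PROMPT to each of MODELS in turn, storing each reply in a register.
Replies go into registers a, b, c ... in order.
Return an alist of (REGISTER . MODEL) so the replies can be found again."
  (let ((register ?a)
        (assigned '()))
    (dolist (model models (nreverse assigned))
      (set-register register (funcall ask-fn model prompt))
      (push (cons register model) assigned)
      (setq register (1+ register)))))
```

Each reply can then be pasted side by side with `insert-register` (C-x r i) for comparison.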

February 26, 2025

Seems to be a common post at the moment, so I thought I would quickly put out there how I updated to Emacs 30.1.

I use an Arch spin called Garuda, running SwayWM, so as it's on Wayland this is simple for me: just update the system using pacman -Syu and emacs-wayland will pull in 30.1 automatically!

February 24, 2025

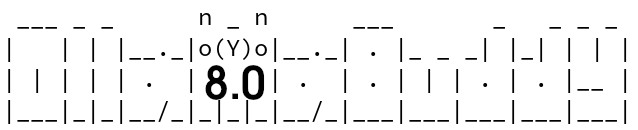

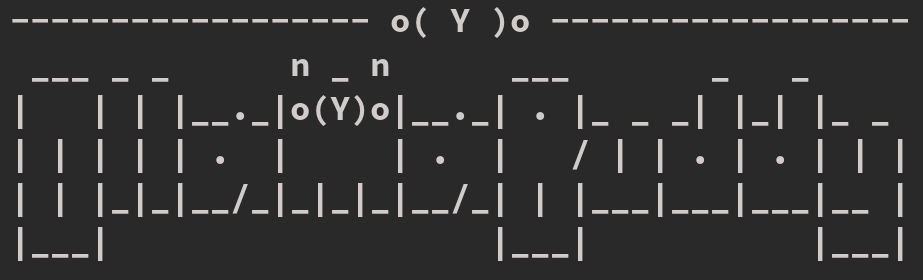

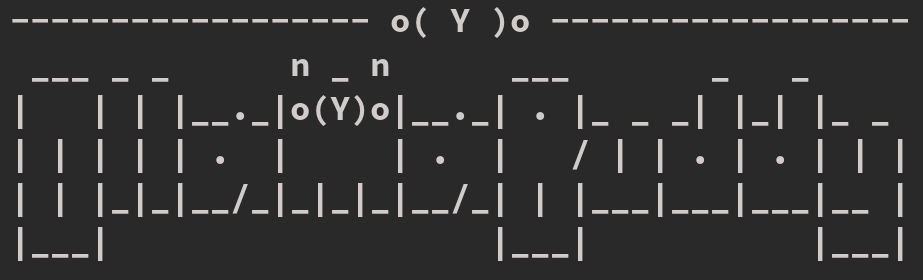

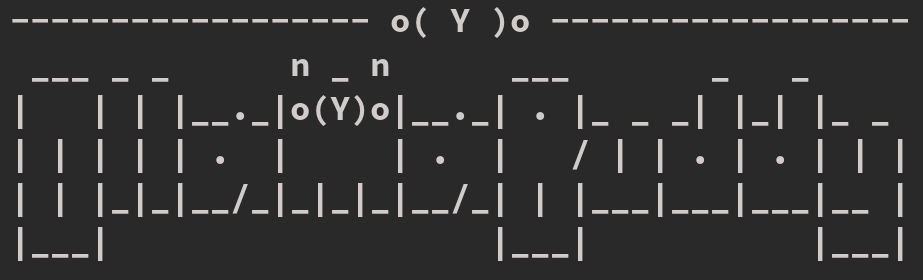

https://github.com/captainflasmr/ollama-buddy

A friendly Emacs interface for interacting with Ollama models. This package provides a convenient way to integrate Ollama’s local LLM capabilities directly into your Emacs workflow with little or no configuration required.

Latest improvements:

ollama-buddy-menu

(use-package ollama-buddy
  :bind ("C-c o" . ollama-buddy-menu))

(use-package ollama-buddy
  :bind ("C-c o" . ollama-buddy-menu)
  :custom ollama-buddy-default-model "llama3.2:1b")

February 21, 2025

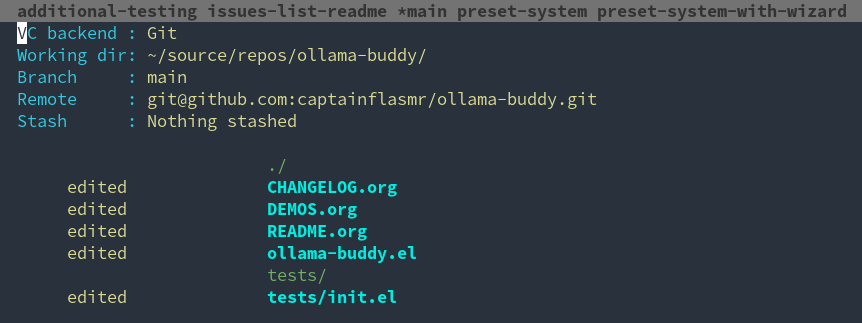

I am currently using vc-mode for my source code configuration needs. I wouldn’t call myself a die-hard vc-mode user (at least not yet!). To earn that title, I think I would need years of experience with it, scoffing at this newfangled thing they call magit while my muscle memory recoils at the thought of reading and interacting with a transient menu!

February 7, 2025

I have been playing around with local LLMs recently through ollama and decided to create the basis for an Emacs package focused on interfacing to ollama specifically. My idea here is to implement something very minimal and as lightweight as possible that could be run out-of-the-ollamabox with no configuration (obviously the ollama server just needs to be running). I have a deeper dive into my overall design thoughts and decisions in the GitHub README and there are some simple demos: "hello"

January 30, 2025

In my continuing quest to replace all the external packages I use with my own elisp versions, so I can still follow my current workflow on an air-gapped system, I would like to replace a single function I use often from embark.

embark allows an action in a certain context, and there are a lot of actions to choose from, but I found I was generally using very few, so to remove my reliance on embark I think I can implement these actions myself.

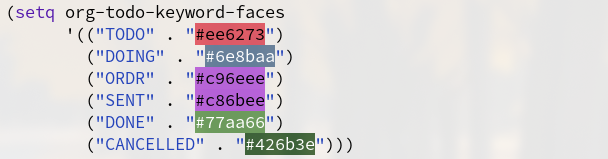

January 25, 2025

In this post, as part of my ongoing mission to replace all (or as many as possible) external packages with pure elisp, I’ll demonstrate how to implement a lightweight alternative to the popular rainbow-mode package, which highlights hex colour codes in all their vibrant glory. I use this quite often, especially when "ricing" my tiling window setup.
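As a taste of the general approach, here is a minimal sketch built on font-lock. All the names (`my/hex-colour-mode` and friends) are hypothetical, it only handles six-digit #RRGGBB codes, and it is not necessarily the implementation the full post arrives at.

```elisp
;; Hypothetical names throughout; a sketch, not the post's final code.
(defun my/hex-colour-foreground (hex)
  "Return \"black\" or \"white\", whichever should read better on HEX.
HEX is a string of the form \"#RRGGBB\"."
  (let* ((r (string-to-number (substring hex 1 3) 16))
         (g (string-to-number (substring hex 3 5) 16))
         (b (string-to-number (substring hex 5 7) 16))
         ;; Rough perceived brightness, scaled to 0..1.
         (luminance (/ (+ (* 0.299 r) (* 0.587 g) (* 0.114 b)) 255.0)))
    (if (> luminance 0.5) "black" "white")))

(defun my/hex-colour-face ()
  "Return an anonymous face for the hex code font-lock has just matched."
  (let ((hex (match-string-no-properties 0)))
    `(:background ,hex :foreground ,(my/hex-colour-foreground hex))))

(defvar my/hex-colour-keywords
  '(("#[[:xdigit:]]\\{6\\}\\b" 0 (my/hex-colour-face) prepend))
  "Font-lock keywords that paint #RRGGBB codes in their own colour.")

(define-minor-mode my/hex-colour-mode
  "Highlight #RRGGBB colour codes in the current buffer."
  :lighter " Hex"
  (if my/hex-colour-mode
      (font-lock-add-keywords nil my/hex-colour-keywords)
    (font-lock-remove-keywords nil my/hex-colour-keywords))
  ;; Refontify so the change shows up straight away.
  (font-lock-flush))
```

Enabling `my/hex-colour-mode` in a config file buffer should then show each colour code on a background of that colour, with black or white text chosen for contrast.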